- Thomas Hermann, Gerold Baier, Ulrich Stephani, Helge Ritter (2006)

In Stockman, Tony (Ed.) Proceedings of the International Conference on Auditory Display (ICAD 2006), pp. 158-163, International Community for Auditory Display (ICAD), Department of Computer Science, Queen Mary, University of London, London, U.K.


Summary

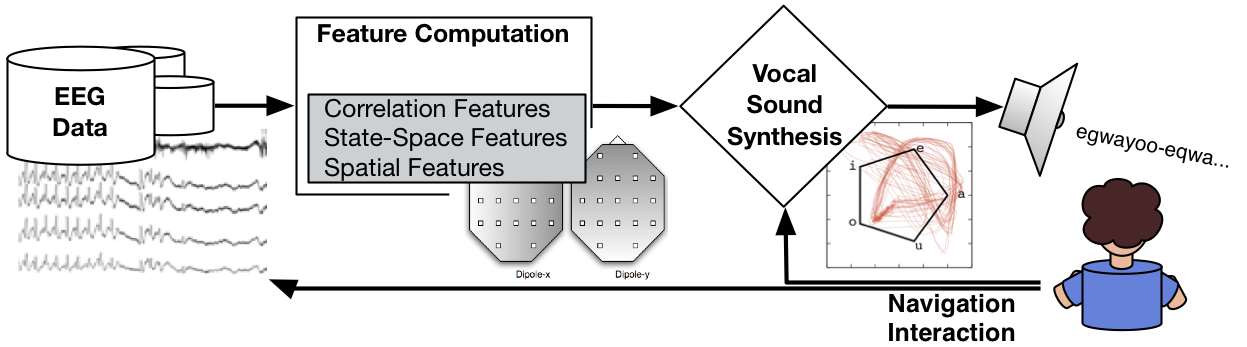

We introduce a novel approach to EEG data sonification for process monitoring and for exploratory as well as comparative data analysis. The approach uses an excitatory/articulatory speech model and a specifically designed parameter mapping to obtain auditory gestalts (or auditory objects) that correspond to features in the multivariate signals. The sonification is adaptable to patient-specific data patterns, so that only characteristic deviations from background behavior (pathologic features) are involved in the sonification rendering. The approach thus combines data mining techniques and case-dependent sonification design to give an application-specific solution with high potential for clinical use. We explain the sonification technique in detail and present sound examples from clinical data sets.
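
The patient-specific adaptation, in which only characteristic deviations from background behavior reach the sound, can be illustrated with a simple baseline gate. This is a minimal sketch under stated assumptions, not the authors' data mining method: it assumes one scalar feature per time step, estimates its mean and standard deviation over a background segment, and passes a z-score on only when its magnitude reaches a hypothetical threshold (`threshold=3.0`). The function names `baseline_stats` and `deviation_gate` are illustrative.

```python
import statistics
from typing import List, Sequence, Tuple


def baseline_stats(background: Sequence[float]) -> Tuple[float, float]:
    """Return mean and population standard deviation of a background segment."""
    mu = statistics.fmean(background)
    sigma = statistics.pstdev(background)
    # Guard against a flat background to avoid division by zero.
    if sigma == 0.0:
        sigma = 1.0
    return mu, sigma


def deviation_gate(
    signal: Sequence[float],
    background: Sequence[float],
    threshold: float = 3.0,  # hypothetical cut-off in standard deviations
) -> List[float]:
    """Keep z-scores whose magnitude reaches the threshold; set the rest to 0.

    Values near the patient's own background level are silenced, so only
    characteristic deviations would be passed on to the sonification stage.
    """
    mu, sigma = baseline_stats(background)
    gated: List[float] = []
    for x in signal:
        z = (x - mu) / sigma
        gated.append(z if abs(z) >= threshold else 0.0)
    return gated


if __name__ == "__main__":
    background = [1.0, -1.0, 1.0, -1.0]  # mean 0, standard deviation 1
    signal = [0.5, 4.0, -5.0, 2.0]
    print(deviation_gate(signal, background))  # [0.0, 4.0, -5.0, 0.0]
```

Because the baseline is estimated per patient, the same raw amplitude may be silent for one recording and audible for another, which is the point of a case-dependent design.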


- Example S1: Patient 1, transition to epileptic activity, dt = 0.005 (S1, mp3, 184k)
- Example S2: Patient 1, transition to epileptic activity, dt = 0.010 (S2, mp3, 356k)
- Example S3: Patient 1, transition to epileptic activity, dt = 0.020 (S3, mp3, 668k)
- Example S4: Patient 2, transition to epileptic activity, dt = 0.005 (S4, mp3, 404k)
- Example S5: Patient 2, transition to epileptic activity, dt = 0.010 (S5, mp3, 820k)
- Example S6: Patient 2, transition to epileptic activity, dt = 0.029 (S6, mp3, 2.2M)
- Example S7: Patient 2, spike series in detail, dt = 0.059 (S7, mp3, 572k)
- Example S8: Patient 3, as reference, dt = 0.005 (S8, mp3, 308k)
- Example S9: Patient 3, as reference, dt = 0.010 (S9, mp3, 624k)

- Example S1: Patient 1, absence EEG (S1, mp3, 52k)
- Example S2: Patient 2, absence EEG (S2, mp3, 52k)
- Example S3: Artefact EEG (S3, mp3, 52k)
- Example S4: Artefact EEG (S3, mp3, 52k)

Contact

Thomas Hermann

Vocal Sonification of Pathologic EEG Features

Sonification examples

Project: See this Sound (Linz)

The "Vocal Sonification of epileptic EEG" has been selected for presentation within the project "See this Sound", Ludwig Boltzmann Institute, Linz, 2009. For that purpose we have selected some new sound examples. The following abstract gives an overview for readers who are new to sonification.

Vocal Sonification of epileptic EEG - Abstract

Sonification is the scientific method of representing data by sound. In the case of medical data, sonification can be used to make pathologic changes in the human body audible. In the present case, epileptic activity recorded by EEG can be perceived as rhythmic vocal sound patterns, so that listening allows one to understand and differentiate the dynamics of epileptic activity. Technically, the method first computes generic features from the raw multivariate EEG data, so that the representation abstracts from the details of the recording situation. These features are then mapped to parameters of an articulatory speech synthesizer that creates vowel sounds. The pathologic features of the EEG data thereby lead to transitions between vowels, resulting in audible vocal shapes such as the rhythms of a 'pathologic speech'. An important motivation for using vocal sonifications is that humans (a) are highly sensitive to, and trained in, processing, memorizing, and recognizing speech-like signals, and (b) can easily reproduce similar sounds with their own vocal tract. The accompanying figure depicts the data flow from the recorded EEG data to the vocal sonification. The user can interactively select data segments and adjust parameters. Details on the sonifications are provided on this website.
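
The step from features to vowel transitions can be sketched as a parameter mapping. The authors drive an articulatory speech synthesizer; this stand-in instead uses simpler formant interpolation, moving the first two formant frequencies (F1, F2) from a rest vowel toward a target vowel as a normalized feature grows. The formant values are rounded textbook approximations for an adult male voice, and the names `feature_to_formants`, `formant_track`, the default vowels, and the `scale` parameter are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

# Rounded first/second formant frequencies (F1, F2) in Hz for an adult male
# voice; illustrative approximations, not parameters from the paper.
VOWEL_FORMANTS: Dict[str, Tuple[float, float]] = {
    "a": (730.0, 1090.0),
    "i": (270.0, 2290.0),
    "u": (300.0, 870.0),
}


def feature_to_formants(
    value: float, rest: str = "a", target: str = "i"
) -> Tuple[float, float]:
    """Interpolate (F1, F2) linearly from the rest vowel (0) to the target vowel (1)."""
    t = min(max(value, 0.0), 1.0)  # clip the normalized feature to [0, 1]
    f1_rest, f2_rest = VOWEL_FORMANTS[rest]
    f1_tgt, f2_tgt = VOWEL_FORMANTS[target]
    return (f1_rest + t * (f1_tgt - f1_rest), f2_rest + t * (f2_tgt - f2_rest))


def formant_track(features: Sequence[float], scale: float) -> List[Tuple[float, float]]:
    """Map each feature magnitude, divided by `scale`, to an (F1, F2) target."""
    return [feature_to_formants(abs(x) / scale) for x in features]


if __name__ == "__main__":
    # Background level (0), a moderate deviation, and a strong deviation.
    print(formant_track([0.0, 4.0, -8.0], scale=8.0))
    # [(730.0, 1090.0), (500.0, 1690.0), (270.0, 2290.0)]
```

A rhythmic feature that alternates between background and pathologic values would then yield alternating vowel targets, which is one way vowel transitions could become audible as rhythms.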